-

Goread2 - Chapter 2: Deployment Hell

This is the second in a series of posts wherein I attempt to recount the history of Goread2 as it approaches a state in which I might actually try to share it more broadly. The first in the series is here.

GoRead2 worked great on my laptop!

On August 2, fresh off the high of the multi-user transformation, I did what any sensible developer would do when they have a working local app and a Google Cloud account: I tried to put it on the internet. What followed was a masterclass in the specific, humbling genre of suffering that is “deploying a real app for the first time”, a genre characterized by problems that are obvious in retrospect, cryptic in the moment, and deeply embarrassing in the git log forever.

Roll the tape.

Hour 1: Our First Production Security Incident

We committed the multi-user stuff at 4:40pm. Thirty-eight minutes later in the git log, we made a second commit with the subject line `SECURITY: Remove foo.env from repository and add to .gitignore`. The commit message explained, with the kind of formal dignity one adopts when hoping nobody notices: `Remove accidentally committed environment file containing OAuth secrets and add it to .gitignore to prevent future commits.`

Even better: the file was called `foo.env`. It contained OAuth credentials. It had just been pushed to a public GitHub repository. Tell me you are not a professional software developer without telling me. And I hadn’t even tried to deploy anything yet!

The rest of August 2 was spent fighting the CI pipeline. First golangci-lint didn’t like the version specification. Then GitHub Actions didn’t support Go 1.23. After downgrading to Go 1.22, the OAuth library had compatibility issues. After downgrading the OAuth library, the security scanner complained. Then the security scanner itself turned out to use a deprecated action. And then (this is my favorite part) the solution to the overly aggressive security scanner was a commit titled `Disable security scanning job to resolve excessive govulncheck errors`.

We had committed actual secrets to a public repo less than an hour ago, and now I was disabling the security scanner. Tremendous.

Day 2: Fun With Authentication

August 3 was the day I actually tried to log in to GoRead2 running on App Engine. The first result was a commit called `Fix auth callback 500 error by implementing Datastore user management`. That is a polite way of saying that when you clicked “Sign in with Google,” the app crashed. The OAuth flow completed successfully and Google redirected back to the app. Then the app threw an internal server error, because we hadn’t actually implemented the part where it saves the user to the database.

This is a relatable oversight, right? You build an auth system, you test the middleware, you write the session management, and then you forget to handle the moment when a new user shows up for the first time. Classic amateur hour over here.
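What was missing was, in effect, a get-or-create step in the OAuth callback. Below is a minimal sketch of that step. It assumes users are keyed by their Google account ID. `User`, `UserStore`, and `GetOrCreateUser` are hypothetical names, not GoRead2’s code. An in-memory map guarded by a mutex stands in for Datastore so the example runs on its own.

```go
package main

import (
	"errors"
	"fmt"
	"sync"
	"time"
)

// User is a hypothetical minimal user record; GoRead2's real schema is not shown in the post.
type User struct {
	GoogleID  string
	Email     string
	CreatedAt time.Time
}

// UserStore stands in for a Datastore-backed user table (assumption).
type UserStore struct {
	mu    sync.Mutex
	users map[string]*User
}

func NewUserStore() *UserStore {
	return &UserStore{users: make(map[string]*User)}
}

// GetOrCreateUser returns the existing user for googleID, or creates one on first sign-in.
// The bool result reports whether a new record was created.
func (s *UserStore) GetOrCreateUser(googleID, email string) (*User, bool, error) {
	if googleID == "" {
		return nil, false, errors.New("empty Google account ID from OAuth callback")
	}
	s.mu.Lock()
	defer s.mu.Unlock()
	if u, ok := s.users[googleID]; ok {
		return u, false, nil // returning user: nothing to save
	}
	u := &User{GoogleID: googleID, Email: email, CreatedAt: time.Now()}
	s.users[googleID] = u // first sign-in: persist before creating a session
	return u, true, nil
}

func main() {
	store := NewUserStore()
	_, created, _ := store.GetOrCreateUser("1234", "reader@example.com")
	fmt.Println("first sign-in created user:", created)
	_, created, _ = store.GetOrCreateUser("1234", "reader@example.com")
	fmt.Println("second sign-in created user:", created)
}
```

In a Datastore-backed version, the lookup and the insert would belong in a single transaction, for the same reason the mutex is here: two simultaneous first sign-ins should not create two user records.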

Fixed that. Tried to add a feed. The feed appeared in the sidebar. Clicked on it: no articles. Committed `Fix missing articles by adding subscription verification to GetUserFeedArticles`. Okay. Now there were articles. Clicked on an article. The browser console showed: `TypeError: this.feeds.forEach is not a function`. The API was returning feeds. The frontend was receiving them but simply declining to do anything with them, because the response format had changed in the multi-user refactor and the JavaScript hadn’t caught up. Committed `Fix TypeError: this.feeds.forEach is not a function`. Fine.

Now, while debugging all of this, another look at `app.yaml` revealed that the OAuth client secret was hardcoded in the config file. Again. Not the credentials themselves this time, just the environment variable syntax `${GOOGLE_CLIENT_SECRET}`, which App Engine doesn’t expand. Still, it was essentially a repeat mistake. This is where the commit messages turned into poetry:

`More secret leakage. C'mon, Claude.` (I asked Claude to review this article and its accusations, and it replied in its inimitable understated voice, “This one is addressed to me, and I find it reasonable.”)

The fix was to use App Engine’s Secret Manager integration syntax, where instead of an environment variable you write `_secret:projects/PROJECT_ID/secrets/SECRET_NAME/versions/latest`. This was duly committed as `Fixing this secret leakage once and for all`. It was immediately followed by `Aaaaand the human makes a typo`. The typo? `{$GOOGLE_CLIENT_SECRET}` instead of `${GOOGLE_CLIENT_SECRET}`. Do you see it? It took me a few minutes.

A commit titled `We will get this right someday` switched to a shorter `_secret:google-client-id` format. Another, titled `Maybe I should have let Claude do this after all`, added a missing `GOOGLE_REDIRECT_URL` that had been omitted entirely. Are we having fun yet?

Day 3: Two Days of OAuth Debugging, Compressed

August 4 was dedicated to the question: why is the OAuth client ID coming through as an empty string in production?

The answer was another typo in `app.yaml`. The Secret Manager path for the client secret was `_secret:project/goread-467200/secrets/..`. It was missing an “s”: it should have been `projects`. Are we loving computer programming, or what? The commit that fixed it was titled, with admirable restraint, `Fix typo in app.yaml: project -> projects for GOOGLE_CLIENT_SECRET`.

But we didn’t know that yet. What we knew was that OAuth was broken, and we didn’t know why. Our approach to solving that problem was adding logging. Like, a LOT of logging. A few of the git log greatest hits, all from August 4:

- `Add OAuth environment variable debugging`
- `Print actual OAuth environment variable values`
- `Add comprehensive startup debugging logs`
- `More debug tweakery`
- `Make OAuth environment variable debugging more explicit for empty values`
- `Simplify OAuth debugging with step-by-step logging`
- `Add GOOGLE_CLOUD_PROJECT debugging to verify project ID`

Yes, seven commits over the course of one day, each one adding more `print` statements to try to see what App Engine was actually loading for those environment variables. Each one required a full deployment to App Engine to test, because the Secret Manager integration only works in production. You cannot easily reproduce “App Engine’s Secret Manager is silently ignoring a malformed path” locally.

On August 5, we finally found the path typo and fixed it. The OAuth flow worked. We removed the debug logging. Commit titles became less combative: `Verbiage tweakage`. Then came a couple of linter fixes. Then `Fix GitHub Actions tests by running unit tests in short mode`, which addressed the fact that the integration tests took too long to run in CI and were timing out.

And finally, GoRead2 was live.
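In hindsight, a fail-fast check at startup could have turned those seven debugging commits into one log line. The sketch below illustrates the idea; it is not GoRead2’s code. It makes these assumptions:

- The app reads `GOOGLE_CLIENT_ID` (a guessed name), `GOOGLE_CLIENT_SECRET`, and `GOOGLE_REDIRECT_URL` from the environment.
- Empty values and unexpanded `${...}` or `{$...}` placeholders should be rejected.
- A Secret Manager path should have the `projects/PROJECT/secrets/NAME/versions/VERSION` shape. The path is checked with any `_secret:` prefix already stripped, because the post doesn’t spell out how that prefix is resolved. The shorter bare-name form would not pass this check.

```go
package main

import (
	"fmt"
	"os"
	"regexp"
	"strings"
)

// secretVersionRE matches a Secret Manager secret version resource name:
// projects/PROJECT/secrets/NAME/versions/VERSION.
var secretVersionRE = regexp.MustCompile(`^projects/[^/]+/secrets/[^/]+/versions/[^/]+$`)

// ValidateSecretPath catches malformed paths such as "project/..." (missing "s").
func ValidateSecretPath(path string) error {
	if !secretVersionRE.MatchString(path) {
		return fmt.Errorf("malformed secret path %q: want projects/PROJECT/secrets/NAME/versions/VERSION", path)
	}
	return nil
}

// ValidateEnvValue rejects empty values and values that still contain
// unexpanded placeholder syntax such as ${VAR} or the typo {$VAR}.
func ValidateEnvValue(name, value string) error {
	switch {
	case strings.TrimSpace(value) == "":
		return fmt.Errorf("%s is empty", name)
	case strings.Contains(value, "${"), strings.Contains(value, "{$"):
		return fmt.Errorf("%s looks like an unexpanded placeholder: %q", name, value)
	}
	return nil
}

// requiredOAuthVars is an assumption: GOOGLE_CLIENT_ID is a guessed name,
// while the other two appear in the post.
var requiredOAuthVars = []string{"GOOGLE_CLIENT_ID", "GOOGLE_CLIENT_SECRET", "GOOGLE_REDIRECT_URL"}

// CheckOAuthEnv validates every required variable and returns all problems at once,
// so a single deploy reports everything that is wrong.
func CheckOAuthEnv(getenv func(string) string) []error {
	var errs []error
	for _, name := range requiredOAuthVars {
		if err := ValidateEnvValue(name, getenv(name)); err != nil {
			errs = append(errs, err)
		}
	}
	return errs
}

func main() {
	// "google-client-secret" is a hypothetical secret name.
	fmt.Println(ValidateSecretPath("project/goread-467200/secrets/google-client-secret/versions/latest"))

	errs := CheckOAuthEnv(os.Getenv)
	for _, err := range errs {
		fmt.Println("config error:", err)
	}
	if len(errs) > 0 {
		// A real server would refuse to start here, for example with os.Exit(1).
		return
	}
	fmt.Println("OAuth configuration looks sane")
}
```

Passing `getenv` as a function, rather than calling `os.Getenv` directly, lets the check be unit-tested locally with a fake environment. That partly addresses the “only reproducible in production” problem.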

Looking back at those 44 commits over four days, the striking thing isn’t that any individual problem was that hard. None of them were. A missing “s” in a config path, a transposed dollar sign, a response format mismatch, an overlooked `foo.env`. Each one was the kind of thing that takes thirty seconds to fix once you know what it is. The hard part was knowing what it was: staring at a 500 error from an App Engine log with no local reproduction path, trying to reason backward from “OAuth client ID is empty” to “you misspelled ‘projects’, you moron.”

I suppose there’s a tax you pay the first time you deploy any app. The gap between what you’ve built and what you’ve deployed turns out to contain some unpredictable number of small decisions you made incorrectly. You try to pay it all at once, over a frantic long weekend, and then you are done. The app works. The secrets are where they should be. The CI pipeline is green.

And you quietly update the `.gitignore` and move on.

Next up: The First Bill Arrives.

-

Goread2 - Chapter 1: The Beginning

This is the first in a series of posts wherein I attempt to recount the history of Goread2 as it approaches a state in which I might actually try to share it more broadly.

I’ve long missed Google Reader, which Google killed in 2013. Over the years, I tried both Feedly and Reeder and was left wanting and/or overwhelmed by product management run amok. I was aware of an open source project called GoRead, which attempted to recreate the Google Reader UX, but it had fallen by the wayside and was no longer maintained.

Early in 2025, at a work event, I saw a demonstration of an AI-powered coding agent that looked intriguing. When Claude Sonnet 4 was released in May, “promising” suddenly switched to “I need to learn as much as I can about this as fast as I can.” I became a regular Claude Code user, and my Claude Pro subscription shortly followed.

By July, I was using Claude Code often enough that I thought resurrecting GoRead might be an interesting side project. At first, I thought I could clone and modernize the GoRead project, but Claude talked me out of it and we started from scratch.

And yeah, when I say “we” in these posts, I’m referring to Claude Code being prompted by me. It feels just as weird to write as it does to read.

We made the first commit on July 26. It was 15 files and about 2,500 lines of code: a Go backend using the Gin web framework, a vanilla JavaScript frontend, an HTML template for the layout, and database support for both SQLite (for running locally) and Google Cloud Datastore (for eventual deployment on App Engine). The `Database` interface that lets the same code talk to either backend was in this first commit, because App Engine deployment was never an afterthought. From the start, I wanted this to be a real, running thing on the internet, and I wanted to see if I could use Claude Code to build an actual product.

That said, at first the data model was simple. A `Feed` had a URL, a title, a description, and timestamps tracking when it was created and last fetched. An `Article` had a feed ID, a URL, a title, content, and an author. The `Database` interface had nine methods (`AddFeed`, `GetFeeds`, `DeleteFeed`, `AddArticle`, `GetArticles`, `GetAllArticles`, `MarkRead`, `ToggleStar`, `UpdateFeedLastFetch`), and that was it. Notably absent from all of this: any concept of a user. So that wasn’t going to scale.

On August 2, we added multi-user support in this commit. The diff was 28 files and over 4,000 new lines of code. Everything that was implicit in the single-user design had to be made explicit. Which user’s feeds are these? Which user starred this article? Who is allowed to see what? We added a Google OAuth 2.0 authentication flow, along with HTTP-only session cookies, session management, and auth middleware wrapping every API endpoint. The `Database` interface grew from 9 methods to nearly 40. `user_feeds` and `user_articles` join tables appeared, giving each user their own independent view of the world. We also implemented the first pass at a test suite:

- unit tests for the database layer, auth system, and feed service
- integration tests verifying that one user could not see another user’s data

Looking at the two commits side by side, what strikes me is how much architectural decision-making was packed into that six-day gap. The dual-database abstraction that was already there made adding multi-user support much easier. The same interface that swapped SQLite for Datastore could absorb the new user-scoped methods without breaking the shape of the code. And the decision to build for App Engine from day one meant the deployment target was already understood when we made the authentication choices.
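The first commit’s `Database` interface might look something like the Go sketch below. The nine method names and the struct fields come from the description above. Every signature is a guess, as are the `IsRead` and `IsStarred` fields, which are implied by `MarkRead` and `ToggleStar`.

To show why an interface like this makes backends swappable, the sketch includes a hypothetical test fake, `fakeDB`. It uses a common Go idiom: embedding the interface in a struct, so the struct satisfies `Database` while implementing only the methods a given test needs.

```go
package main

import (
	"fmt"
	"time"
)

// Feed and Article carry the fields described for the first commit.
// IsRead and IsStarred are assumptions implied by MarkRead and ToggleStar.
type Feed struct {
	ID                      int
	URL, Title, Description string
	CreatedAt, LastFetched  time.Time
}

type Article struct {
	ID, FeedID                  int
	URL, Title, Content, Author string
	IsRead, IsStarred           bool
}

// Database uses the nine method names from the first commit; the signatures are guesses.
type Database interface {
	AddFeed(feed *Feed) error
	GetFeeds() ([]Feed, error)
	DeleteFeed(feedID int) error
	AddArticle(article *Article) error
	GetArticles(feedID int) ([]Article, error)
	GetAllArticles() ([]Article, error)
	MarkRead(articleID int, read bool) error
	ToggleStar(articleID int) error
	UpdateFeedLastFetch(feedID int, fetched time.Time) error
}

// fakeDB embeds the interface so it satisfies Database while overriding only
// AddFeed and GetFeeds. Calling any other method panics, because the embedded
// Database value is nil.
type fakeDB struct {
	Database
	feeds []Feed
}

func (f *fakeDB) AddFeed(feed *Feed) error {
	feed.ID, feed.CreatedAt = len(f.feeds)+1, time.Now()
	f.feeds = append(f.feeds, *feed)
	return nil
}

func (f *fakeDB) GetFeeds() ([]Feed, error) {
	return append([]Feed(nil), f.feeds...), nil // copy so callers can't mutate the store
}

// FeedTitles is written against the interface, so it would run unchanged on a
// SQLite backend, a Datastore backend, or this fake.
func FeedTitles(db Database) ([]string, error) {
	feeds, err := db.GetFeeds()
	if err != nil {
		return nil, err
	}
	titles := make([]string, 0, len(feeds))
	for _, f := range feeds {
		titles = append(titles, f.Title)
	}
	return titles, nil
}

func main() {
	db := &fakeDB{}
	_ = db.AddFeed(&Feed{URL: "https://example.com/feed.xml", Title: "Example Feed"})
	titles, _ := FeedTitles(db)
	fmt.Println(titles)
}
```

Growing to nearly 40 user-scoped methods changes the interface, but callers like `FeedTitles` still depend only on the interface. Neither they nor the backends need to know about each other.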

I’m also glad that we didn’t skimp on the tests at this stage. Once you have multiple users, every piece of data needs an owner, and the places where you forgot to check ownership become security bugs rather than minor annoyances. I felt that integration tests, particularly the ones verifying user data isolation, were necessary even this early.
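The ownership rule can be made concrete with a minimal sketch of user-scoped access. It assumes articles are shared, while subscriptions and read state live in per-user rows, modeled on the `user_feeds` and `user_articles` join tables. The in-memory maps and the `Store` type are illustrative assumptions, not GoRead2’s code. So is the signature of `GetUserFeedArticles`, a method name borrowed from a commit message in GoRead2’s history.

```go
package main

import (
	"errors"
	"fmt"
)

// ErrNotSubscribed is returned when a user asks for a feed they do not follow.
var ErrNotSubscribed = errors.New("user is not subscribed to this feed")

type userFeed struct{ UserID, FeedID int }       // row in a user_feeds-style join table
type userArticle struct{ UserID, ArticleID int } // row in a user_articles-style join table

type Article struct {
	ID, FeedID int
	Title      string
}

// Store is a hypothetical in-memory model: articles are shared, while
// subscriptions and read state are scoped to a user through the join tables.
type Store struct {
	articles      []Article
	subscriptions map[userFeed]bool
	read          map[userArticle]bool
}

func NewStore() *Store {
	return &Store{subscriptions: map[userFeed]bool{}, read: map[userArticle]bool{}}
}

func (s *Store) Subscribe(userID, feedID int) { s.subscriptions[userFeed{userID, feedID}] = true }

func (s *Store) MarkRead(userID, articleID int) { s.read[userArticle{userID, articleID}] = true }

func (s *Store) IsRead(userID, articleID int) bool { return s.read[userArticle{userID, articleID}] }

// GetUserFeedArticles checks ownership (a subscription row) before returning anything.
func (s *Store) GetUserFeedArticles(userID, feedID int) ([]Article, error) {
	key := userFeed{userID, feedID}
	if !s.subscriptions[key] {
		return nil, ErrNotSubscribed
	}
	var out []Article
	for _, a := range s.articles {
		if a.FeedID == feedID {
			out = append(out, a)
		}
	}
	return out, nil
}

func main() {
	s := NewStore()
	s.articles = []Article{{ID: 1, FeedID: 10, Title: "Hello"}}
	s.Subscribe(1, 10) // user 1 follows feed 10; user 2 does not
	s.MarkRead(1, 1)

	_, err := s.GetUserFeedArticles(2, 10)
	fmt.Println("user 2 blocked:", errors.Is(err, ErrNotSubscribed))
	fmt.Println("read for user 1:", s.IsRead(1, 1), "read for user 2:", s.IsRead(2, 1))
}
```

An isolation test exercises exactly this kind of check. The second user’s request must fail even though the feed exists, and one user’s read state must never show up for another.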

By early August, GoRead2 had gone from a personal weekend project to something that could maybe possibly be used by strangers. The next step was to actually make it available on Google Cloud. That’s when things started getting complicated.

Next up: Deployment Hell.

-

2025 Year In Review

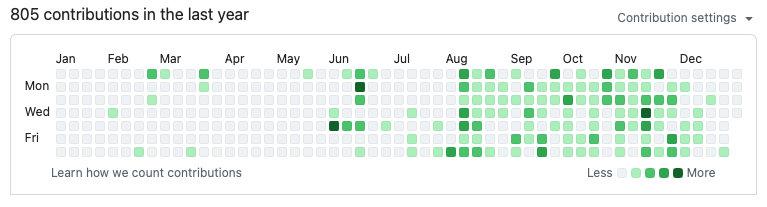

In 2025, I purchased a Claude Pro subscription. Guess when?

With tons of help from my new friend Claude Code, I started (because you never really complete) some fun projects in 2025:

- I created a Video Poker game that updates winning hand probabilities in real time as you select cards. I threw this together in a hotel room in Vegas in under an hour out of nothing but curiosity.

- At work, we spent a lot of time dealing with construction cranes appearing unexpectedly in our airspace. I thought, there has to be a data source somewhere I can use to automate this. It turns out there are three: 1) the FAA data object files, 2) the FAA OE/AAA database, and 3) NOTAMs. I built a website that lets you search for registered obstacles within a given number of nautical miles of a U.S. address. Most of the data sources turn out to be unreliable (especially during federal government shutdowns), but building the site and automating daily data collection and site builds was a fun exercise.

- I really miss Google Reader, and after trying both Feedly and Reeder, I was left wanting. After Google killed Reader, someone resurrected it for a while as an open source project, but it was no longer maintained. I pointed Claude at that person’s code and added bells and whistles, and I now use it exclusively for my daily RSS reading needs. See GoRead2. I’m currently the only customer/user, but it is fully integrated with Stripe and supports a monthly subscription, so in theory others could use it, too. I’m not exactly keeping it secret, but it has not had a proper alpha/beta test, so there are likely bugs to be found.
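The core of an obstacle search like the one above is a distance filter. Here is a minimal sketch, which assumes two steps have already happened: the obstacle records are loaded with decimal-degree coordinates, and the address has been geocoded to a latitude and longitude. Both steps are out of scope here.

The sketch uses the haversine formula for great-circle distance. It scales by a mean Earth radius of 6,371 km, converted to nautical miles at 1.852 km per NM. `Obstacle` and `WithinRadius` are hypothetical names, and this is not necessarily how the site does it.

```go
package main

import (
	"fmt"
	"math"
)

// earthRadiusNM is the mean Earth radius (6371.0 km) in nautical miles (1 NM = 1.852 km).
const earthRadiusNM = 6371.0 / 1.852

type Obstacle struct {
	Name     string
	Lat, Lon float64 // decimal degrees
}

// distanceNM returns the great-circle distance between two points using the haversine formula.
func distanceNM(lat1, lon1, lat2, lon2 float64) float64 {
	toRad := func(deg float64) float64 { return deg * math.Pi / 180 }
	dLat := toRad(lat2 - lat1)
	dLon := toRad(lon2 - lon1)
	a := math.Sin(dLat/2)*math.Sin(dLat/2) +
		math.Cos(toRad(lat1))*math.Cos(toRad(lat2))*math.Sin(dLon/2)*math.Sin(dLon/2)
	// Clamp guards against floating-point values just above 1.
	return 2 * earthRadiusNM * math.Asin(math.Min(1, math.Sqrt(a)))
}

// WithinRadius keeps obstacles no farther than radiusNM from the given point.
func WithinRadius(obstacles []Obstacle, lat, lon, radiusNM float64) []Obstacle {
	var out []Obstacle
	for _, o := range obstacles {
		if distanceNM(lat, lon, o.Lat, o.Lon) <= radiusNM {
			out = append(out, o)
		}
	}
	return out
}

func main() {
	obstacles := []Obstacle{
		{"crane near the search point", 47.6100, -122.3300},
		{"tower far away", 47.0000, -122.0000},
	}
	// Hypothetical search point in Seattle, already geocoded.
	for _, o := range WithinRadius(obstacles, 47.6062, -122.3321, 1.0) {
		fmt.Println(o.Name)
	}
}
```

Treating the Earth as a sphere is an approximation. For radii of a few nautical miles the error is small, but an ellipsoidal geodesic calculation would be more precise.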

Those were the big ones. As for other major time savers with Claude and friends, I don’t write scripts anymore or spend much time caring about what data I need to extract from a database. I just run `select * from table_name`, download everything as a CSV file, and let Claude Code figure it out.

Not sure what to expect in 2026, but Happy New Year to anyone out there reading this.

-

About This Website

For somebody who posts once or twice per year, I’ve spent an inordinate amount of my time setting up this website:

- I have no interest in running my own WordPress instance or anything like that, so I use Jekyll.

- In the rare case when I write a new post (like today!), it gets checked into a GitHub repository.

- The GitHub repo contains an action that uploads the website content to an S3 bucket upon every commit.

- The domain is registered in Route53 and configured to serve static content from said S3 bucket. No webserver required.

- In close partnership with my dear friend and always reliable intern Claude Code, I created my own Jekyll theme that I can modify however/whenever I want.

- I use the lovely Jekyll Remote Theme plugin to avoid the hassle of creating gemfiles.

I also do most of my hacking on my iPad. I use the free Terminus app to ssh into an EC2 instance where I can pull from and push to GitHub. The free version of the app supports port forwarding, so I can test changes in Safari on iPadOS. It works fine for the vast majority of changes. The only times I need to use a “real” computer are those unusual occasions when I need access to the browser console.

-

Fun With Family History

My great-great grandfather was born in 1850 and moved to Seattle from Kansas in the mid-1880s. I’m not sure why he moved to Seattle, but I do know that my great-great grandmother died in 1885 at age 32 and is buried in Kansas. I also know that the 1889 Washington State Census lists him living in Seattle’s Second Ward with a “housewife” named Mattie, age 21.

In most records, his name appears as P.J. Pratt. His occupation was “teamster” but he shows up most frequently in the Post-Intelligencer buying and selling plots of real estate. As one example, he bought lot 14, block 28 in the Eden & Knights tract on December 1, 1890 for $500 from C.H. Pierce. And he sold the same lot on February 7, 1893 for $3000 to A.H. Turner. I think that lot is at the corner of E Cherry St and 19th Ave (i.e., worth considerably more today).

In 1890, P.J. hired a contractor to build a house for his growing family on property he owned at 506 20th Ave. He agreed to pay $225 for labor and furnish the materials, and the contractor C.A. Dobson agreed to build a six-room cottage. While the house was being built, the family took up residence in a smaller structure on the same lot.

Seattle apparently did not keep weather records until the 1890s, but one can easily imagine Saturday, June 7, 1890 being a typical Seattle June day: high temperature in the mid-60s, cloudy with an occasional sunbreak, and a long early summer evening with sunset after 9 PM.

On this Saturday around 5:30 PM, P.J. went to the house and asked Dobson to give him the key, as P.J. wanted to work on the house himself over the weekend. Dobson refused, because Pratt had not yet paid for the house. I could not possibly describe what happened next better than the P-I:

“Dobson refused to give up the key, and Pratt cursed him. Dobson started to walk away. Pratt followed, and began beating Dobson, who fell to the ground. (A witness) pulled Pratt off. When Pratt rolled over, it was found that Dobson was dead.”

My great-great grandfather was charged with murdering Dobson. His case was number 118 in the new King County Superior Court. The trial was held in August and September. To defend himself, P.J. lawyered up, hiring J.T. Ronald (who would later serve as Mayor of Seattle 1892-1894) and Samuel Piles (who would later serve as a U.S. Senator 1905-1911).

I have the court records, but sadly there is no transcript, so I’ll never really know how P.J. managed to be acquitted. According to his obituary, he claimed self-defense.

On his deathbed in 1897, he made a statement that was published in the P-I. “I am sure,” he said, “that none of the blows I struck Mr. Dobson were instrumental in causing his death…The blows that I struck were nothing more than would be given in any ordinary fight.” He went on to say Dobson died when he fell and hit his head on a pointed tree stump.

P.J. is buried in an unmarked grave at Lake View Cemetery. Last time I was in the neighborhood, his house was still standing, expanded from its original form and now a small apartment building. I wonder if anyone who lives there knows its history? And my family has now lived in Seattle for five generations and counting.

-

So Whatcha Want?

I’ve shared this advice with a couple of people in the last few days and thought it worth writing down.

It is very easy to identify the things you don’t want from your job. These things tend to be obvious. You probably don’t want to work with assholes, for example. You probably wish there was less chaos, and more order. And when you begin thinking this way, it is very easy to start believing that the grass is greener and a job change will solve all of your problems.

I think this is a mistake.

It is very difficult to identify the things you do want from your job. These things are rarely obvious. But you have to identify those things before you can know 1) whether your current job isn’t going to work for you, and/or 2) whether any particular job is going to work for you.

You have to put a fair amount of energy into thinking about the things you want! Some things are easy. You want to get paid, of course, and maybe you have geographic limitations. It gets trickier. Maybe you want to work with a particular set of technologies, or programming paradigms. Maybe you have opinions about the sort of product(s) you are willing to work on.

Once you know what you want, then, and only then, can you know if you are making the right decision.

Put another way, someone once told me that you should never quit your job after you’ve had a bad day. If you have a good (or even a normal) day and you still want to leave, you know you are making the right decision.

-

Our Modern World

Of all the conveniences in our modern world, the ability to deposit a check using my iPhone camera might be the most amazing. Remember having to actually go to a bank to make a deposit?

Now if we could just move past paper checks altogether…

-

Hello World

Over the years, I’ve tried many different flavors of personal website, from LiveJournal to WordPress to lovingly hand-crafted HTML to my own weirdo hand-rolled “convert markdown to HTML using a Makefile and some shell scripts” solution.

Seems like GitHub Pages are the latest craze, so I thought I’d give it a whirl and see what happens.

Update: Yeah, that was fun. GitHub Pages are all fine and dandy, but I can just as easily roll my own. Jekyll generates a static website, so it doesn’t really matter whether I use GitHub Pages or just upload it via a GitHub workflow to the S3 bucket I’ve been using for eons.